HDTV has the dubious distinction of being the most controversial item of new technology to be introduced in recent times. Enmeshed in long-running arguments over basic technology, price and market access, HDTV is having a painful birth worldwide, and the Australian experience differs little from that overseas. While the focus of the domestic arguments in many respects differs from the focus of the international arguments, the common factor is that the standard evokes loud and partisan argument.

Wherein lies the truth? In this month's feature we will take a closer look at the basic technology in HDTV, examine its likely consequences in the wider market, and attempt to shed some light on the broader issues.

Analogue Television

Analogue TV in its modern form is late 1940s technology, and in most respects a well-entrenched artifact of the industrial age. When television was introduced, it incorporated the very state of the art in wireless broadcast and modulation technology.

The video or picture information was presented using an interlaced raster scan. Synchronisation pulses were attached at the beginning of every line in the picture, and special lines were used to synchronise the frames of the picture. The video signal used intensity modulation, but inverted in polarity (negative modulation), so that brighter parts of the picture produced a lower carrier amplitude.
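To make the scan structure concrete, here is a minimal sketch of how an interlaced frame splits into two fields, together with an illustrative negative modulation mapping. The function names are hypothetical, and the carrier levels (sync tips at 100 %, blanking near 75 % and peak white near 10 % of peak carrier) are approximate figures assumed for illustration, not values given in this article. Real 625-line PAL fields also contain half lines, a detail this toy ignores.

```python
def interlaced_fields(lines_per_frame):
    """Split a frame's scan lines into two interlaced fields.

    The first field scans the odd lines and the second the even lines,
    so each field covers the whole screen at half the vertical resolution.
    """
    odd_field = list(range(1, lines_per_frame + 1, 2))
    even_field = list(range(2, lines_per_frame + 1, 2))
    return odd_field, even_field


# Approximate carrier levels as fractions of peak carrier (assumed values).
SYNC_TIP = 1.00     # sync pulses drive the carrier to its maximum
BLANKING = 0.75     # black / blanking level
PEAK_WHITE = 0.10   # brightest picture content, lowest carrier


def carrier_amplitude(brightness):
    """Negative modulation: brighter picture gives a lower carrier amplitude.

    brightness runs from 0.0 (black, at blanking level) to 1.0 (peak white).
    """
    if not 0.0 <= brightness <= 1.0:
        raise ValueError("brightness must be in [0, 1]")
    return BLANKING - brightness * (BLANKING - PEAK_WHITE)


if __name__ == "__main__":
    odd, even = interlaced_fields(10)
    print("field 1:", odd)    # field 1: [1, 3, 5, 7, 9]
    print("field 2:", even)   # field 2: [2, 4, 6, 8, 10]
    for level in (0.0, 0.5, 1.0):
        print(f"brightness {level}: carrier {carrier_amplitude(level):.3f} "
              f"(sync tip {SYNC_TIP})")
```

Because sync tips sit above anything the picture can produce, a receiver can separate timing from picture information simply by amplitude.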

The modulation technique was also advanced for the period, using Vestigial SideBand (VSB) Amplitude Modulation. This technique almost halved the bandwidth that conventional double sideband AM would have needed, since most of one sideband of the modulation envelope was removed, leaving only a vestige.
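The saving is easy to check with a little arithmetic. The sketch below compares double sideband and vestigial sideband occupancy, assuming PAL-B style figures of 5 MHz video bandwidth and a 0.75 MHz vestige; both numbers are assumptions, not taken from the text above.

```python
# Assumed PAL-B figures (not from the article): 5 MHz of video baseband
# and a 0.75 MHz vestige of the lower sideband.
VIDEO_BANDWIDTH_MHZ = 5.0
VESTIGE_MHZ = 0.75


def dsb_bandwidth(video_mhz):
    """Full double sideband AM: each sideband is video_mhz wide."""
    return 2 * video_mhz


def vsb_bandwidth(video_mhz, vestige_mhz):
    """VSB AM: one full sideband plus a small vestige of the other."""
    return video_mhz + vestige_mhz


dsb = dsb_bandwidth(VIDEO_BANDWIDTH_MHZ)
vsb = vsb_bandwidth(VIDEO_BANDWIDTH_MHZ, VESTIGE_MHZ)
print(f"DSB: {dsb} MHz, VSB: {vsb} MHz, VSB/DSB = {vsb / dsb:.3f}")
```

With these assumed figures the vision signal fits in under 6 MHz, leaving room in a 7 MHz channel for the FM sound carrier.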

The sound channel used the best technology available for high quality analogue transmission: Frequency Modulation (FM). With its strong ability to reject interference, FM proved to be an excellent choice.

Early television was not without its warts. Birth defects and radiation injuries due to X-ray emissions through the glass Cathode Ray Tube faces, and very nasty glass shrapnel injuries from tube implosions, both proved to be issues. The modern tube uses a toughened glass face, with X-ray absorbent dopants in the glass. By today's standards an early television receiver was a dangerous contraption. Reliability was also an issue, since the vacuum tube technology of the day was only capable of several thousand hours of operation before the tube cathodes became exhausted. This made early television expensive to maintain and created a massive industry of tube jockeys whose principal technical skill lay in guessing which tube to swap to eliminate a particular symptom.

The US were the first to introduce colour television. Their NTSC standard used Quadrature Amplitude Modulation (QAM) to encode two colour difference channels onto a single sub-carrier. The instantaneous phase and amplitude of the sub-carrier encoded the hue and saturation of the picture respectively. By demodulating the colour sub-carrier, and combining the monochrome video signal with the two colour difference channels, the Red, Green and Blue signals could be reconstituted to drive the three gun colour tube.
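The quadrature principle can be shown in a few lines. This sketch modulates two colour difference values onto one sub-carrier (one in sine phase, one in cosine phase), recovers them by synchronous demodulation against a locally regenerated reference, and reports hue as the phasor angle and saturation as its length. It uses U/V-style axes and a normalised sub-carrier for simplicity; NTSC proper used I and Q axes rotated relative to these, and all names here are illustrative.

```python
import math

SAMPLES_PER_CYCLE = 32   # normalised sub-carrier sampling (illustrative)
CYCLES = 10              # average over a whole number of sub-carrier cycles


def modulate(u, v):
    """QAM: u rides on the sine phase, v on the cosine phase of one sub-carrier."""
    n = SAMPLES_PER_CYCLE * CYCLES
    return [
        u * math.sin(2 * math.pi * k / SAMPLES_PER_CYCLE)
        + v * math.cos(2 * math.pi * k / SAMPLES_PER_CYCLE)
        for k in range(n)
    ]


def demodulate(chroma, phase_error=0.0):
    """Synchronous demodulation against a regenerated sub-carrier.

    phase_error (radians) models a receiver reference that is out of step
    with the transmitter, e.g. after a phase-distorting amplifier.
    """
    n = len(chroma)
    u = 2 / n * sum(c * math.sin(2 * math.pi * k / SAMPLES_PER_CYCLE + phase_error)
                    for k, c in enumerate(chroma))
    v = 2 / n * sum(c * math.cos(2 * math.pi * k / SAMPLES_PER_CYCLE + phase_error)
                    for k, c in enumerate(chroma))
    return u, v


def hue_and_saturation(u, v):
    """Hue is the phase angle in degrees; saturation is the amplitude."""
    return math.degrees(math.atan2(v, u)), math.hypot(u, v)


print(hue_and_saturation(*demodulate(modulate(0.3, 0.4))))
print(hue_and_saturation(*demodulate(modulate(0.3, 0.4), math.radians(20))))
```

The first call recovers a hue of about 53.1 degrees with saturation 0.5; the second, with a 20 degree reference error, keeps the saturation but shifts the hue by 20 degrees, which is exactly the NTSC weakness described next.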

NTSC quickly earned itself the nickname of Never The Same Colour, since the QAM technique encoded colour hue in the phase angle of the sub-carrier, and that phase angle could be distorted by the ugly phase characteristics of the cheap and nasty booster amplifiers, front end amplifiers and intermediate frequency stages of the day.

The Europeans followed the US with colour. Germany developed the PAL (Phase Alternating Line) standards, derived from NTSC but with the phase of one colour difference component inverted on alternate lines. The colour burst sent with every line's sync pulse also swings in phase line by line, so the receiver knows which lines are inverted, and averaging adjacent lines cancels phase distortion introduced in transmission. The final PAL-B standard was widely adopted in most of Europe and is the scheme used in Australia. The French, true to form, decided to go it alone and developed the SECAM standard, which used FM techniques to encode the colour. SECAM was adopted mostly in French-speaking nations, but also became the communist bloc standard. Today it is mainly used in France and Russia.
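Why the alternation helps can be shown with complex arithmetic. In this idealised sketch (it assumes the same phase error hits two adjacent lines, and the function names are hypothetical), a received chroma phasor u + jv is rotated by the path's phase error. NTSC decodes the rotated phasor directly; the PAL receiver re-inverts V on the alternate line (a complex conjugate) and averages the two lines, as a one-line delay would.

```python
import cmath
import math


def received_phasor(u, v, phase_error):
    """Chroma phasor (u + jv) after a transmission path rotates its phase."""
    return complex(u, v) * cmath.exp(1j * phase_error)


def ntsc_decode(u, v, phase_error):
    """NTSC: the phase error lands directly on the decoded hue."""
    return received_phasor(u, v, phase_error)


def pal_decode(u, v, phase_error):
    """PAL: V is inverted on alternate lines; the receiver re-inverts it
    (a complex conjugate) and averages the two lines, so equal and
    opposite hue errors cancel."""
    line_a = received_phasor(u, v, phase_error)
    line_b = received_phasor(u, -v, phase_error).conjugate()
    return (line_a + line_b) / 2


def describe(z):
    """Return (hue in degrees, saturation) of a decoded chroma phasor."""
    return math.degrees(cmath.phase(z)), abs(z)


error = math.radians(10)
print("sent:", describe(complex(0.3, 0.4)))
print("NTSC:", describe(ntsc_decode(0.3, 0.4, error)))   # hue off by 10 degrees
print("PAL: ", describe(pal_decode(0.3, 0.4, error)))    # hue correct, saturation x cos(10 deg)
```

The PAL result works out to (u + jv) multiplied by cos of the phase error: the hue is preserved and only the saturation drops slightly, which the eye tolerates far better than a hue shift.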

Analogue TV has evolved very little since the introduction of colour. We have seen teletext and stereo sound transmission added. Neither represents a significant enhancement to the basic product.

Analogue TV has several serious limitations. The first is its sensitivity to interference, and to self-interference via multipath propagation of signals, the latter a bigger issue in cluttered urban environments. The second is its poor resolution, since it is limited by a fixed modulation scheme which is locked to the picture scan mechanism. Offering at best the equivalent of an 800 x 600 interlaced monitor image, it is hardly an icon of picture quality and does no justice to modern productions made for cinema presentation. With almost no ability to accommodate further technological growth, analogue TV is showing its half-century-old origins. While the modern TV set is a technological marvel by the standards of 1950, it falls well short of what can be achieved in the digital age. Herein, however, also lie the roots of much of today's controversy about HDTV. The incessant chorus of complaints about HDTV costs is firmly centred on comparisons with an evolved and tired 1950s technology. Reality check for HDTV critics: compare the cost of a modern HDTV set to your annual salary, and compare that to the cost of a television set against annual salaries in 1955. You might be disappointed!
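To put the resolution gap in numbers, the short calculation below compares pixel counts for the article's generous 800 x 600 estimate against two common HD raster sizes. The HD formats are assumptions chosen for illustration; they are not quoted in the text above.

```python
# Pixel counts: the "at best 800 x 600" analogue estimate against two
# common HD raster sizes (the HD formats are assumed examples).
FORMATS = {
    "analogue (approx.)": (800, 600),
    "1280 x 720": (1280, 720),
    "1920 x 1080": (1920, 1080),
}

baseline = 800 * 600
ratios = {}
for name, (w, h) in FORMATS.items():
    pixels = w * h
    ratios[name] = pixels / baseline
    print(f"{name:>20}: {pixels:>9,} pixels, {ratios[name]:.2f}x analogue")
```

Even the smaller HD format carries nearly twice the detail of the best-case analogue picture, and the larger one more than four times.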

The DVB and ATSC HDTV standards now being introduced in Europe, Australia and the US build upon nineties technologies such as MPEG lossy picture encoding and advanced digital modulation schemes. They are not the first foray into HDTV, the Japanese having made an earlier attempt to leapfrog the pack with a satellite-delivered analogue HDTV standard. With prohibitive demands for bandwidth, analogue HDTV never quite made the grade.

Digital HDTV is clearly the path of the future, since it provides superior quality, can fit into the tight 6 to 8 MHz broadcast channel bandwidth of established analogue TV, and provides the power and flexibility of a digital transmission channel and encoding scheme. To better appreciate the longer-term implications of HDTV, it is very useful to explore the basic technology.

HDTV Standards and Technology Issues

The two principal families of HDTV standards currently penetrating the marketplace are the US ATSC (Grand Alliance) and the European DVB standards. The Americans were the first to make a serious commitment to developing HDTV, during the early nineties trade war with Japan. US TV manufacturers had been almost wiped out by the previous two decades of competition with the Japanese, who had managed to displace them from most of the US domestic and export markets. HDTV was seen as an opportunity to make a new start and also to revive the US industrial base for manufacturing commodity video RAM, DRAM and consumer market RF components. The Grand Alliance, comprising several US manufacturers and industry groups, decided to exploit the capabilities of the new MPEG lossy compression video encoding scheme, which removed the bandwidth problems that had plagued HDTV schemes using analogue encoding or uncompressed digital video.
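A back-of-envelope calculation shows why compression was the key. The parameters below are assumptions, not figures from the article: a 1920 x 1080 raster at 30 frames/s, 8-bit 4:2:2 sampling (16 bits per pixel on average), and the roughly 19.4 Mbit/s payload commonly quoted for a 6 MHz ATSC channel.

```python
# Rough arithmetic behind "MPEG removed the bandwidth problem".
# All parameters are assumptions chosen for illustration.
WIDTH, HEIGHT = 1920, 1080
FRAMES_PER_SECOND = 30
BITS_PER_PIXEL = 16            # 8-bit 4:2:2 sampling
CHANNEL_PAYLOAD_BPS = 19.39e6  # commonly quoted 6 MHz ATSC payload

raw_bps = WIDTH * HEIGHT * FRAMES_PER_SECOND * BITS_PER_PIXEL
compression_needed = raw_bps / CHANNEL_PAYLOAD_BPS

print(f"uncompressed: {raw_bps / 1e6:.0f} Mbit/s")             # uncompressed: 995 Mbit/s
print(f"compression needed: about {compression_needed:.0f}:1")  # about 51:1
```

Squeezing nearly a gigabit per second into a channel built for analogue TV demands a compression ratio of around fifty to one, far beyond what any lossless scheme could deliver.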

Considerable effort and money were expended in developing the standard and in designing and evaluating trials hardware and systems, to identify likely problem areas in the technology.

The result of the Grand Alliance effort was the ATSC (Advanced Television Systems Committee) standards package (www.sarnoff.org). It combined MPEG-2 digitised video with 8-VSB (8-level trellis-coded Vestigial SideBand) modulation. The 8-level (3-bit) trellis code is a convolutional forward error control scheme which was specifically chosen for good resistance to white noise, and thus good system performance with weak HDTV signals. The ATSC scheme takes the MPEG-encoded video and sound and randomises it with a scrambler, after which each 187-byte packet is Reed-Solomon (R-S) error control coded with 20 parity bytes, giving a 207-byte block. The R-S code is used to overcome the limitations of the trellis code in handling burst noise. The R-S encoded data stream is then interleaved to further reduce sensitivity to burst noise, and synchronisation sequences are added. This stream is then fed into the trellis encoder and then the VSB modulator to produce the signal for transmission. For cable TV use, a 16-VSB scheme is available which trades noise immunity for higher data rates. A data rate of the order of 20 Mbps is needed for rapidly changing scenes such as sport or action cinema.
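The Reed-Solomon step can be sketched in Python. This is a generic systematic encoder over GF(256) that appends 20 parity bytes to a 187-byte packet (a shortened 207-byte codeword), plus a syndrome check that reads all zero for an intact block. The field polynomial x^8 + x^4 + x^3 + x^2 + 1 and the generator roots a^0 to a^19 are assumptions of this sketch; consult the ATSC A/53 standard for the normative definition. Decoding, which locates and corrects up to 10 bad bytes, is omitted.

```python
FIELD_POLY = 0x11D   # x^8 + x^4 + x^3 + x^2 + 1 (assumed field polynomial)
PARITY_BYTES = 20    # ATSC-style: 187 data bytes + 20 parity = 207

# Antilog (EXP) and log tables for GF(256) arithmetic.
EXP = [0] * 512
LOG = [0] * 256
_x = 1
for _i in range(255):
    EXP[_i] = _x
    LOG[_x] = _i
    _x <<= 1
    if _x & 0x100:
        _x ^= FIELD_POLY
for _i in range(255, 512):
    EXP[_i] = EXP[_i - 255]


def gf_mul(a, b):
    """Multiply two field elements via the log tables."""
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]


def poly_mul(p, q):
    """Multiply polynomials with GF(256) coefficients (highest degree first)."""
    out = [0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] ^= gf_mul(a, b)
    return out


def generator_poly(nsym):
    """g(x) = (x + a^0)(x + a^1)...(x + a^(nsym-1)) (assumed root choice)."""
    g = [1]
    for i in range(nsym):
        g = poly_mul(g, [1, EXP[i]])
    return g


GENERATOR = generator_poly(PARITY_BYTES)


def rs_encode(message):
    """Systematic encoding: append the remainder of message(x)*x^nsym mod g(x)."""
    remainder = list(message) + [0] * PARITY_BYTES
    for i in range(len(message)):
        coef = remainder[i]
        if coef:
            for j in range(1, len(GENERATOR)):
                remainder[i + j] ^= gf_mul(GENERATOR[j], coef)
    return bytes(message) + bytes(remainder[len(message):])


def poly_eval(poly, x):
    """Evaluate a polynomial at x using Horner's rule."""
    y = 0
    for coef in poly:
        y = gf_mul(y, x) ^ coef
    return y


def syndromes(codeword):
    """All zero for a valid codeword; any non-zero value flags corruption."""
    return [poly_eval(codeword, EXP[i]) for i in range(PARITY_BYTES)]


packet = bytes(range(187))                  # dummy 187-byte payload
block = rs_encode(packet)
print(len(block), any(syndromes(block)))    # 207 False

damaged = bytearray(block)
damaged[50] ^= 0x5A                         # simulate one corrupted byte
print(any(syndromes(bytes(damaged))))       # True
```

Because the codeword is a multiple of g(x), it evaluates to zero at every root of g(x); a corrupted byte breaks that, and a full decoder uses the non-zero syndromes to find and repair it.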

The ATSC standard has at this time been adopted by the US and, with minor modifications, by Canada, South Korea and the Philippines. Japan is fielding its own ISDB standard. Europe and Australia have opted for the DVB-T (Digital Video Broadcasting - Terrestrial) standard.

The DVB-T scheme is defined by the ETSI EN 300 744 standard (www.etsi.org). It employs a very different approach to modulation, using a COFDM (Coded Orthogonal Frequency Division Multiplexing) scheme, again combined with MPEG-2 encoded video and sound (ETSI ETR 154). COFDM is derived from research published in the mid nineties, and post-dates the early US HDTV research.

Like the ATSC system, the DVB-T system starts with an MPEG-encoded data stream. Packets of 188 bytes are fed into a randomiser and then a Reed-Solomon encoder, which adds 16 parity bytes. The R-S coded data is interleaved. At this level the DVB-T and ATSC systems differ little in concept, although many of the parameters differ slightly. The systems diverge radically at this point. The DVB-T system uses Quadrature Phase Shift Keying (QPSK), or 16 or 64 Quadrature Amplitude Modulation (16-QAM or 64-QAM), applied to 1512 or 6048 individual data carriers. Rather than modulating a single carrier at a very high data rate, COFDM modulates a very large number of carriers, spaced at 4.464 kHz or 1.116 kHz respectively, each with a very slow symbol rate.
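Interleaving can be sketched as a Forney convolutional interleaver: I branches used in rotation, branch k delaying its bytes by k x M visits, with a mirror-image deinterleaver at the receiver. I = 12 and M = 17 are the figures commonly quoted for the DVB-T outer interleaver (so I x M equals the 204-byte R-S block); treat them as assumptions here. The demonstration hits the channel with a 12-byte noise burst and shows where the damage lands after deinterleaving.

```python
from collections import deque

I_BRANCHES = 12   # interleaving depth (assumed DVB-T figure)
M_CELLS = 17      # delay step per branch; I * M = 204 = one R-S block


def _run(data, depths):
    """Cycle bytes through branches; branch k delays by depths[k] visits."""
    fifos = [deque([0] * d) for d in depths]
    out = []
    for t, byte in enumerate(data):
        fifo = fifos[t % len(fifos)]
        fifo.append(byte)            # newest byte in
        out.append(fifo.popleft())   # byte from depths[k] visits ago out
    return out


def interleave(data):
    return _run(data, [k * M_CELLS for k in range(I_BRANCHES)])


def deinterleave(data):
    return _run(data, [(I_BRANCHES - 1 - k) * M_CELLS for k in range(I_BRANCHES)])


DELAY = I_BRANCHES * (I_BRANCHES - 1) * M_CELLS   # end-to-end delay: 2244 bytes

data = [t % 256 for t in range(30 * 204)]
sent = interleave(data)
received = list(sent)
for s in range(3000, 3012):          # a 12-byte noise burst on the channel
    received[s] ^= 0xFF
recovered = deinterleave(received)

expected = [0] * DELAY + data[:len(data) - DELAY]
errors = [t for t in range(len(data)) if recovered[t] != expected[t]]
gaps = [b - a for a, b in zip(errors, errors[1:])]
print(len(errors), set(gaps))        # 12 {203}
```

The 12 consecutive channel errors emerge as isolated bad bytes 203 bytes apart, so each 204-byte R-S block sees only one or two of them, well within what the outer code can correct.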

The simplest comparison is that COFDM transmits data in parallel, whereas 8-VSB transmits serially. To aid receiver synchronisation, COFDM continually transmits pilot carriers, along with 17 or 68 Transmission Parameter Signalling (TPS) carriers.
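The carrier spacings follow directly from the FFT size. The sketch below assumes an 8 MHz channel (elementary period 7/64 microseconds), 1705 and 6817 total carriers in the 2k and 8k modes, 64-QAM (6 bits per carrier) and a 1/4 guard interval; none of these parameters is stated in the article. It reproduces the 4.464 kHz and 1.116 kHz spacings quoted above and shows how very slow per-carrier symbols still add up to tens of Mbit/s.

```python
# Assumptions not stated in the article: 8 MHz channel, 1705 / 6817 total
# carriers (2k / 8k modes), 64-QAM and a 1/4 guard interval.
ELEMENTARY_PERIOD_S = 7 / 64 * 1e-6


def dvbt_mode(fft_size, total_carriers, data_carriers, bits_per_carrier=6, guard=1 / 4):
    useful_symbol_s = fft_size * ELEMENTARY_PERIOD_S         # active part of a symbol
    spacing_hz = 1 / useful_symbol_s                         # orthogonal carrier spacing
    occupied_hz = total_carriers * spacing_hz                # approximate occupied bandwidth
    symbol_s = useful_symbol_s * (1 + guard)                 # symbol period incl. guard
    gross_bps = data_carriers * bits_per_carrier / symbol_s  # before FEC overheads
    return spacing_hz, occupied_hz, 1 / symbol_s, gross_bps


for name, fft_size, total, data in (("2k", 2048, 1705, 1512), ("8k", 8192, 6817, 6048)):
    spacing, occupied, symbols_per_s, gross = dvbt_mode(fft_size, total, data)
    print(f"{name}: spacing {spacing:.1f} Hz, occupied {occupied / 1e6:.2f} MHz, "
          f"{symbols_per_s:.0f} symbols/s per carrier, gross {gross / 1e6:.1f} Mbit/s")
```

Both modes land on the same gross rate under these assumptions: the 8k mode uses four times as many carriers, each running four times more slowly.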

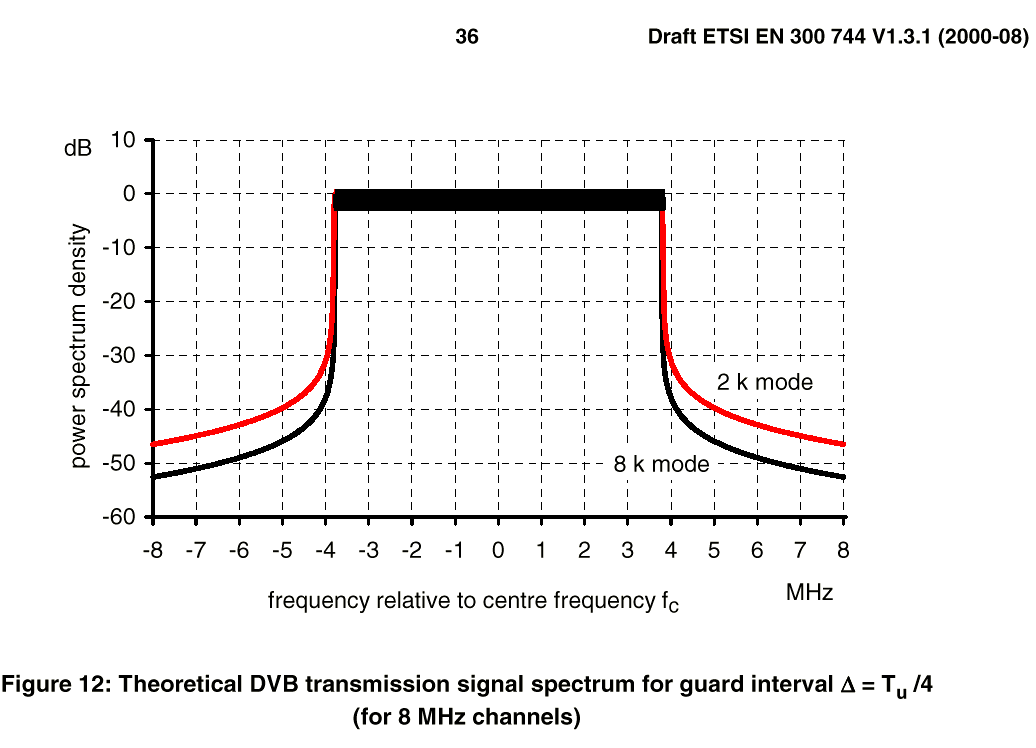

Figure 1 shows the characteristically flat COFDM spectrum (ETSI EN 300 744, 2000-08).

The COFDM scheme has the nice property that established Fast Fourier Transform (FFT) algorithms can be used in the demodulator, and the inverse FFT in the modulator. Indeed, the DVB-T standard specifies these as the preferred technique.
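A toy OFDM modem shows how neatly the FFT fits. Each carrier gets two bits as a Gray-coded QPSK point; one inverse FFT turns the whole set of carriers into a block of time samples, and one forward FFT at the receiver separates them again. This is a minimal sketch assuming 64 carriers, all carrying data, and a hand-written radix-2 FFT; DVB-T itself uses 2048- or 8192-point transforms with unused edge carriers, pilots and coding.

```python
import cmath
import random

# Gray-coded QPSK: adjacent constellation points differ in one bit.
QPSK = {(0, 0): 1 + 1j, (0, 1): -1 + 1j, (1, 1): -1 - 1j, (1, 0): 1 - 1j}


def fft(x):
    """Recursive radix-2 FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even, odd = fft(x[0::2]), fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def ifft(x):
    """Inverse FFT via the identity ifft(X) = conj(fft(conj(X))) / n."""
    n = len(x)
    return [v.conjugate() / n for v in fft([complex(v).conjugate() for v in x])]


def ofdm_modulate(bits, n_carriers):
    """Map 2 bits per carrier to QPSK, then IFFT to one time-domain symbol."""
    if len(bits) != 2 * n_carriers:
        raise ValueError("need exactly two bits per carrier")
    pairs = zip(bits[0::2], bits[1::2])
    return ifft([QPSK[p] for p in pairs])


def ofdm_demodulate(samples):
    """FFT back to per-carrier values, then slice each to the nearest QPSK point."""
    bits = []
    for z in fft(samples):
        bits += [0 if z.imag > 0 else 1, 0 if z.real > 0 else 1]
    return bits


random.seed(42)
N = 64   # toy size
tx_bits = [random.randint(0, 1) for _ in range(2 * N)]
samples = ofdm_modulate(tx_bits, N)
print(ofdm_demodulate(samples) == tx_bits)   # True
```

The parallel nature of COFDM is visible here: 128 bits go out in a single 64-sample symbol, each carrier holding its QPSK point for the whole symbol.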

There is considerable argument in broadcast engineering circles as to which of the two standards, 8-VSB ATSC or DVB-T COFDM, is better. While the US and European authorities have pretty much set their positions in concrete, loud public arguments are raging over the export markets for their respective domestic manufacturers. There are two bones of contention here.

Trial tests and theoretical predictions indicate that COFDM performs significantly better in the presence of multipath interference (self-interference by the signal being reflected off large terrain features or other objects, the symptom of which is heavy ghosting in analogue TV). At high carrier-to-noise levels, COFDM exhibits a 3 dB advantage over 8-VSB in multipath rejection. On the other hand, where multipath interference is low, of the order of -20 dB, COFDM demands a carrier-to-noise ratio typically 4 dB higher than 8-VSB. In the presence of impulse noise interference, COFDM may require up to 10 dB higher signal levels than 8-VSB to perform properly.
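Much of COFDM's multipath tolerance comes from a feature not discussed above, the guard interval: each symbol is preceded by a cyclic prefix, a copy of its own tail. If an echo arrives within that prefix, it turns into nothing worse than a fixed complex gain on each carrier, which one division per carrier undoes. The sketch below assumes 64 carriers, an 8-sample prefix and a single echo at 0.6 amplitude delayed 5 samples; it also assumes the receiver already knows the echo, whereas a real receiver estimates it from the pilot carriers.

```python
import cmath
import random


def dft(x, inverse=False):
    """Plain O(n^2) DFT; inverse=True gives the normalised inverse transform."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [sum(x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n) for t in range(n))
           for k in range(n)]
    return [v / n for v in out] if inverse else out


def transmit(symbols, cp_len):
    """IDFT to the time domain, then prepend a cyclic prefix (guard interval)."""
    body = dft(symbols, inverse=True)
    return body[-cp_len:] + body


def two_path_channel(signal, delay, gain):
    """Direct signal plus one echo, e.g. a reflection off a building."""
    return [s + (gain * signal[t - delay] if t >= delay else 0)
            for t, s in enumerate(signal)]


def receive(samples, cp_len, delay, gain):
    """Drop the prefix, DFT, then undo the echo with one divide per carrier.

    The echo is assumed known here; a real receiver estimates it from pilots.
    """
    n = len(samples) - cp_len
    bins = dft(samples[cp_len:])
    response = [1 + gain * cmath.exp(-2j * cmath.pi * k * delay / n) for k in range(n)]
    return [y / h for y, h in zip(bins, response)]


random.seed(7)
N, CP, ECHO_DELAY, ECHO_GAIN = 64, 8, 5, 0.6   # echo delay must not exceed the prefix
sent = [random.choice([1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j]) for _ in range(N)]
got = receive(two_path_channel(transmit(sent, CP), ECHO_DELAY, ECHO_GAIN),
              CP, ECHO_DELAY, ECHO_GAIN)
worst = max(abs(a - b) for a, b in zip(got, sent))
print(worst)   # rounding error only
```

A single-carrier system facing the same echo needs an adaptive time-domain equaliser to remove the ghost, which is the heart of the 8-VSB versus COFDM multipath argument.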

COFDM advocates argue strongly that most TV receivers are in dense urban areas where multipath is the main problem, whereas 8-VSB advocates point out that cheaper antennas and lower transmitter power suffice for good 8-VSB reception, compared to COFDM reception. A city viewer is thus likely to do better with COFDM, whereas a rural viewer in an area without large hills would probably do better with 8-VSB. The reality is that wherever good analogue reception exists, both standards will perform well.
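Decibel figures hide how large these differences are, because the scale is logarithmic. The conversion below turns the margins quoted above into linear power ratios; for a given receiving antenna, that is roughly the factor by which transmitter power (or antenna gain) would have to rise to close the gap. The labels are paraphrases of the text, and the function name is illustrative.

```python
def db_to_power_ratio(db):
    """Convert a decibel figure to a linear power ratio."""
    return 10 ** (db / 10)


# Margins quoted in the text above.
FIGURES_DB = {
    "COFDM multipath advantage at high C/N": 3,
    "extra C/N COFDM needs with low multipath": 4,
    "worst-case extra signal for impulse noise": 10,
}

for label, db in FIGURES_DB.items():
    print(f"{label}: {db} dB = {db_to_power_ratio(db):.2f}x power")
```

A 3 dB edge is a doubling of power, 4 dB is about two and a half times, and 10 dB is a tenfold difference, which explains why the rural reception argument is taken seriously.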

While both sides can score some technical points, the real issue in the debate is whose manufacturers get the first and biggest bite of the market. This style of acrimonious argument over how the pie gets carved up differs little from Australia's HDTV debate.

What Do I Get With HDTV?

As interesting as the technical debate may be, it is now appropriate to explore the consumer side of the equation.

One of the biggest issues to have percolated to the surface in the HDTV debate, especially in Australia, is the question: what do I get for such an expensive product? The mass media have not been short of opinion on this issue, not surprisingly very often precisely reflecting the commercial agendas of their respective owners.

The big difference we will see with HDTV is a dramatic improvement in the quality of the picture and sound presented. Advocates of HDTV correctly point out that HDTV picture and sound are of cinema quality. Never mind the woeful content!

The first improvement we will see is that digital transmission eliminates the noise and ghosting artifacts we have put up with in analogue TV for half a century. This is a tremendous leap in technology, much like the introduction of error-correcting modems.

The second improvement is that the 4:3 aspect ratio, 25 frames/sec picture is replaced with a range of possible formats, and more flexibility in picture frame rates. One of the objectives of the Grand Alliance effort, which set the context for the whole HDTV development process, was to accommodate the various standard cinema aspect ratio and frame rate formats, so that the full range of celluloid stocks could be presented without the nasty problems of frame rate conversion and cropping to fit the 4:3 aspect ratio tube. A minimal HDTV requirement is support for a 16:9 aspect ratio picture.
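The cost of squeezing cinema formats onto a 4:3 tube can be worked out exactly with fractions. This sketch computes how much of the screen height a letterboxed wide picture fills (equivalently, how much of the picture width survives if it is cropped to fill the screen instead), using 2.35:1 as an assumed example of a wide cinema format. It also shows the common practice, not described in the article, of running 24 frames/s film at 25 frames/s on PAL.

```python
from fractions import Fraction

ASPECT_4_3 = Fraction(4, 3)
ASPECT_16_9 = Fraction(16, 9)
ASPECT_SCOPE = Fraction(235, 100)   # 2.35:1 widescreen cinema (assumed example)


def used_fraction(screen, source):
    """For a source at least as wide as the screen: share of screen height a
    letterboxed picture fills, which is also the share of source width kept
    if the picture is cropped (pan-and-scan) to fill the screen instead."""
    return min(Fraction(1), screen / source)


for screen_name, screen in (("4:3", ASPECT_4_3), ("16:9", ASPECT_16_9)):
    for source_name, source in (("16:9", ASPECT_16_9), ("2.35:1", ASPECT_SCOPE)):
        print(f"{source_name} on {screen_name}: {float(used_fraction(screen, source)):.0%}")

# Film shot at 24 frames/s is commonly sped up to run at 25 frames/s on PAL.
speedup = Fraction(25, 24)
print(f"PAL film speed-up: {float(speedup - 1):.1%}")   # PAL film speed-up: 4.2%
```

On a 4:3 tube a 16:9 picture either wastes a quarter of the screen or loses a quarter of its width; a 16:9 screen shows it whole.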

The third improvement is that HDTV uses a frame buffer scheme and can thus present a very sharp and stable picture, since its display hardware has more in common with a desktop computer than with a classical analogue TV set.

The fourth improvement is sound, and it is not to be scoffed at. Australian HDTV was to use the Dolby Digital Broadcast (DDB) scheme (www.dolby.com), also known as AC-3 or Dolby-D. The Dolby model is based upon the idea of adaptively allocating more bits of the transmission channel to those portions of the signal which are more readily perceived by the human ear, to achieve a 15:1 compression ratio. The DDB system can provide up to 3 forward channels, two rear channels and a Low Frequency Effects channel to drive a woofer with 3 Hz to 120 Hz signals. A 24-bit dynamic range is supported (cf. CD at 16 bits).
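The figures above can be sanity-checked, and the idea of perceptual bit allocation sketched, in a few lines. The channel count, 48 kHz sample rate, 20-bit samples and 384 kbit/s coded rate are assumptions (treating the LFE as a full channel slightly overstates the raw rate). The allocator is a generic greedy noise-to-mask scheme of the kind found in textbooks, not Dolby's actual AC-3 algorithm; the band figures are invented for illustration.

```python
import math

# Assumed figures (not from the article): 5.1 channels sampled at 48 kHz with
# 20-bit samples, coded at 384 kbit/s.
CHANNELS, SAMPLE_RATE_HZ, BITS_PER_SAMPLE = 6, 48_000, 20
CODED_BPS = 384_000

pcm_bps = CHANNELS * SAMPLE_RATE_HZ * BITS_PER_SAMPLE
print(f"PCM {pcm_bps / 1e6:.2f} Mbit/s -> {pcm_bps / CODED_BPS:.0f}:1")  # PCM 5.76 Mbit/s -> 15:1


def dynamic_range_db(bits):
    """Ideal dynamic range of linear PCM: about 6.02 dB per bit."""
    return 20 * math.log10(2 ** bits)


def allocate_bits(smr_db, total_bits, db_per_bit=6.02):
    """Greedy perceptual bit allocation (generic textbook scheme).

    smr_db holds each band's signal-to-mask ratio. Each bit given to a band
    lowers its quantisation noise by about 6 dB; every bit goes to the band
    whose noise currently sits furthest above the masking threshold.
    """
    bits = [0] * len(smr_db)
    for _ in range(total_bits):
        nmr = [smr - db_per_bit * b for smr, b in zip(smr_db, bits)]
        worst = max(range(len(nmr)), key=nmr.__getitem__)
        bits[worst] += 1
    return bits


print(round(dynamic_range_db(16), 1), round(dynamic_range_db(24), 1))  # 96.3 144.5
print(allocate_bits([20, 5, -3, 12], total_bits=8))                    # [4, 1, 0, 3]
```

The band whose signal sits below its masking threshold (-3 dB) gets no bits at all, which is where the savings in perceptual coding come from.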

The fifth improvement is the potential for significantly greater intelligence in a TV set, due to the use of a wholly digital platform with a common frame buffer for the screen display. An HDTV set will be able to double as a web browsing platform or computer display simply due to its basic architecture. Many of the proposed interactive TV/web schemes rely fundamentally upon this idea.

This aspect of HDTV raises other important questions. If you put an HDTV quality monitor on a PC and fit an HDTV receiver/decoder card into it, is it a TV or a computer? Recent experiments performed in the US involved the transmission of an ATSC-encoded MPEG-2 HDTV picture stream over a TCP/IP channel, layered on top of an optical fibre telco link. As decent HDTV quality requires only around 20 Mbit/s throughput, a household wired with a 100 Mbit/s LAN could probably support several HDTV channels concurrently. Contention over the family room TV? Banish the dissenters to their study to watch it on their computer.
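The "several channels" estimate is simple division once protocol overheads are allowed for. The sketch assumes, purely for illustration, that about 70 % of a 100 Mbit/s Ethernet's raw rate is usable for streaming; the 20 Mbit/s per stream comes from the text.

```python
# Assumed: roughly 70% of a 100 Mbit/s LAN's raw rate is usable for streaming
# after protocol overheads; each HDTV stream needs about 20 Mbit/s.
LAN_BPS = 100e6
USABLE_FRACTION = 0.7
STREAM_BPS = 20e6

streams = int(LAN_BPS * USABLE_FRACTION // STREAM_BPS)
print(f"{streams} concurrent HDTV streams")   # 3 concurrent HDTV streams
```

Under these assumptions three rooms could each watch a different HDTV channel at once; a more efficient network would stretch that to four.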

The common thread in the domestic and international HDTV debate has been the issue of cost to consumers. When HDTV was conceived, one of the background aims was to push large-area display technology into the high-volume consumer marketplace to drive unit costs down. This was seen as pivotal to reducing the costs of high-resolution, large-area displays for computers. Another background aim was to facilitate the penetration of TFT LCD displays, and similar low-voltage flat panel display technology, into the volume consumer market. The computer industry would simply ride on the coat-tails of the consumer boom and benefit by using HDTV display technology on the desktop.

I for one would be very happy with a 30 inch diagonal, 2:1 aspect ratio TFT LCD on my desktop. The ergonomic gains are considerable.

To date the primary cost driver in HDTV sets has been, not surprisingly, the display technology. If the set uses a CRT, it will require the same technology as top-end graphics monitors, which, as we all know, are not cheap. If it uses a plasma or LCD display, the poor production yields and low volumes in the current computer market also mean that it will not be cheap.

It is a classic chicken-and-egg problem. The computer market cannot support the volumes required to drive the cost down to something appealing to HDTV buyers. The slow build-up in the consumer HDTV market has made it difficult for many manufacturers to amortise large display R&D and tooling-up costs.

However, once some critical mass in HDTV set sales is crossed, all of this will begin to change very rapidly, since an affordable HDTV set also amounts to an affordable large-screen computer monitor.

Other important pieces of technology will also contribute to the synergy between HDTV and computing. DVD technology and high-capacity digital tapes will at some stage be capable of supporting the required 20 Mbit/s data rate at an affordable cost. Whether the computing market or the HDTV market drives this technology to that point first remains to be seen.
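The storage demand is easy to estimate. At the 20 Mbit/s quoted above, the arithmetic below gives the data volume per hour and the running time on a single-layer DVD, whose 4.7 GB capacity is an assumed figure not given in the article.

```python
RATE_BPS = 20e6                 # HDTV stream rate quoted in the text
DVD_SINGLE_LAYER_BYTES = 4.7e9  # assumed single-layer DVD capacity

bytes_per_hour = RATE_BPS / 8 * 3600
dvd_minutes = DVD_SINGLE_LAYER_BYTES * 8 / RATE_BPS / 60

print(f"{bytes_per_hour / 1e9:.1f} GB per hour")           # 9.0 GB per hour
print(f"{dvd_minutes:.0f} minutes per single-layer DVD")   # 31 minutes per single-layer DVD
```

Roughly half an hour per disc shows why higher-capacity media are needed before a full-length HDTV feature fits on one disc.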

What is clear at this stage is that both the HDTV and computing markets stand to benefit significantly from technology developed for either market. Indeed, a future device with a high-performance CPU and HDTV display and decoder hardware will be difficult to label accurately, especially if it uses an industry-standard operating system and can accommodate a mouse, keyboard and network connection.

Perhaps the biggest problem HDTV faces is that of unrealistic expectations. This is arguably true of almost every group which has contributed to the debate. HDTV has the potential to benefit consumers and industry, but for that potential to be realised, all players must do their bit. So far this has not been happening.

![Home - Air Power Australia Website [Click for more ...]](APA/APA-Title-Main.png)

![Sukhoi PAK-FA and Flanker Index Page [Click for more ...]](APA/flanker.png)

![F-35 Joint Strike Fighter Index Page [Click for more ...]](APA/jsf.png)

![Weapons Technology Index Page [Click for more ...]](APA/weps.png)

![News and Media Related Material Index Page [Click for more ...]](APA/media.png)

![Surface to Air Missile Systems / Integrated Air Defence Systems Index Page [Click for more ...]](APA/sams-iads.png)

![Ballistic Missiles and Missile Defence Page [Click for more ...]](APA/msls-bmd.png)

![Air Power and National Military Strategy Index Page [Click for more ...]](APA/strategy.png)

![Military Aviation Historical Topics Index Page [Click for more ...]](APA/history.png)

![Intelligence, Surveillance and Reconnaissance and Network Centric Warfare Index Page [Click for more ...]](APA/isr-ncw.png)

![Information Warfare / Operations and Electronic Warfare Index Page [Click for more ...]](APA/iw.png)

![Systems and Basic Technology Index Page [Click for more ...]](APA/technology.png)

![Related Links Index Page [Click for more ...]](APA/links.png)

![Homepage of Australia's First Online Journal Covering Air Power Issues (ISSN 1832-2433) [Click for more ...]](APA/apa-analyses.png)
